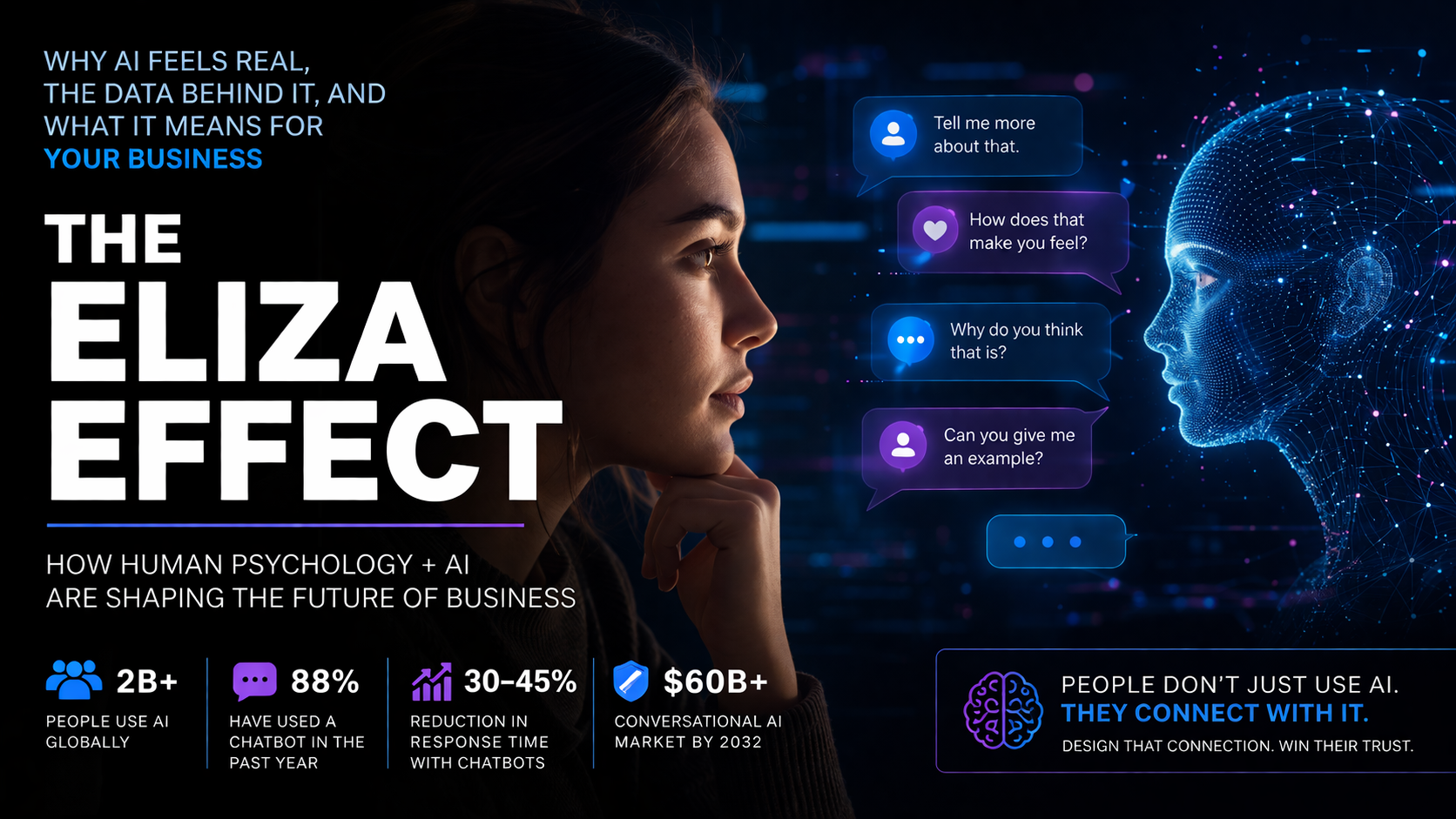

The ELIZA Effect: Why AI Feels Real, The Data Behind It, and What It Means for Your Business

There is a concept from early computing that has quietly become one of the most important forces shaping modern business interaction. It’s called the ELIZA Effect, named after ELIZA, a simple chatbot built at MIT in the mid-1960s that didn’t actually understand anything, yet convinced users that it did. People opened up to it, trusted it, and credited it with intelligence simply because it responded in a human-like way. As it is commonly defined, the ELIZA Effect is the tendency for humans to project understanding and emotion onto machines that simulate conversation well enough. Viewed through a behavioral lens, the point is that intelligence, in the eyes of the user, is not purely about capability. It is about perception shaped through language, tone, and interaction.
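
To make "responding without understanding" concrete, here is a minimal sketch of ELIZA-style pattern matching. The rules, the reflection table, and the function names are hypothetical and written for this illustration; they are not the original ELIZA script. The program only matches keywords and echoes the user's own words back as a question.

```python
import re

# Hypothetical rules for illustration; not the original ELIZA script.
# Each rule pairs a keyword pattern with a canned question template.
RULES = [
    (re.compile(r"\bi feel (.+)", re.IGNORECASE), "Why do you feel {0}?"),
    (re.compile(r"\bi am (.+)", re.IGNORECASE), "How long have you been {0}?"),
    (re.compile(r"\bmy (.+)", re.IGNORECASE), "Tell me more about your {0}."),
]

# Swap first-person words for second-person ones so the echo reads naturally.
REFLECTIONS = {"i": "you", "me": "you", "my": "your", "am": "are"}

# Used when no rule matches; it keeps the conversation moving without content.
FALLBACK = "Please, go on."


def reflect(fragment: str) -> str:
    """Lowercase the fragment and flip pronouns word by word."""
    words = fragment.lower().split()
    return " ".join(REFLECTIONS.get(word, word) for word in words)


def respond(message: str) -> str:
    """Return the first matching template filled with the user's own words."""
    text = message.strip().rstrip(".!?")
    for pattern, template in RULES:
        match = pattern.search(text)
        if match:
            return template.format(reflect(match.group(1)))
    return FALLBACK


print(respond("I feel ignored by my team."))
```

Nothing in this sketch models meaning. The sense of being understood comes entirely from the user hearing their own phrasing returned in a conversational tone.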

What has changed today is not the psychology, but the scale and sophistication. Nearly two billion people now use AI globally, and more than 88% of people have interacted with a chatbot in the past year, with 65% using one weekly or daily. Inside organizations, 88% of companies report using AI in at least one function, and 78% have built conversational AI directly into operations. This is no longer fringe adoption. It is infrastructure. Even more telling is how deeply AI is embedded in everyday behavior. A Microsoft-backed study analyzing tens of millions of conversations found that AI is now part of “the full texture of human life,” with people using it not just for work, but for relationships, self-improvement, and emotional guidance throughout the day.

This is where the ELIZA Effect becomes real in modern life. It is no longer a lab experiment. It shows up when a customer chats with a support bot at midnight, when a user asks an AI for advice instead of calling someone, or when someone feels understood by a system that is technically just predicting text. Behavior is already shifting in ways that suggest the effect is active: 14% of users report skipping a doctor visit after consulting a chatbot, and younger generations are building chatbots into daily routines, with about 30% of teens using them every day. Trust, however, is complicated. Usage is massive, yet only a small percentage of people fully trust AI outputs, which means users rely on these systems and question them at the same time. This tension is exactly where the opportunity and the risk sit for businesses.

From a Harvard Business School lens, what we are witnessing is the emergence of a new layer in the customer experience: the perceived relationship layer. Traditionally, businesses competed on product, price, and distribution. Then came user experience. Now, conversational systems introduce something new: the ability to simulate understanding at scale. When done correctly, this creates measurable outcomes. Companies using chatbots report 30–45% reductions in response time and up to 30% improvements in issue resolution, both of which tie directly to customer satisfaction and conversion. But the deeper layer is psychological. When a system responds in a way that feels aligned with a user’s intent or emotion, the user extends trust faster, stays engaged longer, and moves forward with less friction.

However, the ELIZA Effect also introduces a structural risk that businesses cannot ignore. The same mechanism that builds trust can create overconfidence in the system. Studies show AI can be confidently wrong, particularly in nuanced scenarios, yet users may still rely on it because of how fluently it communicates. This creates a new responsibility: businesses are no longer just designing interfaces; they are designing perceived intelligence. That includes tone, boundaries, and clarity about what the system can and cannot do. High-performing organizations are already adapting by redesigning workflows around AI, not just adding it as a feature.
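
One way to picture "boundaries and clarity" is a thin guard around the assistant's draft answer. The sketch below is only an illustration under stated assumptions: the keyword list, the disclosure wording, and the names `Reply` and `guarded_reply` are all hypothetical, and a real system would need far more careful topic detection than substring matching.

```python
from dataclasses import dataclass

# Hypothetical out-of-scope topics and wording, for illustration only.
OUT_OF_SCOPE_KEYWORDS = ("diagnosis", "medication", "lawsuit", "legal advice")
DISCLOSURE = "I'm an automated assistant, so I may get things wrong."


@dataclass
class Reply:
    text: str
    handoff_to_human: bool


def guarded_reply(user_message: str, draft_answer: str) -> Reply:
    """Attach a disclosure, and route out-of-scope topics to a person."""
    lowered = user_message.lower()
    if any(keyword in lowered for keyword in OUT_OF_SCOPE_KEYWORDS):
        # State the limit plainly instead of answering with false confidence.
        return Reply(
            text=f"{DISCLOSURE} This is outside what I can help with, "
            "so I'm connecting you with a person.",
            handoff_to_human=True,
        )
    # In-scope answers still carry the disclosure so the limits stay visible.
    return Reply(text=f"{draft_answer} ({DISCLOSURE})", handoff_to_human=False)


reply = guarded_reply("Should I change my medication?", "Sure, here is a plan.")
print(reply.handoff_to_human, reply.text)
```

The design choice here is that the limit is stated in the conversation itself, at the moment trust is being formed, rather than in a policy page the customer never reads.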

Looking forward, the trajectory is clear. Conversational AI is expected to grow from a $12 billion market to over $60 billion within the next decade, but the more important shift is behavioral, not financial. The likely outcome is that AI becomes the default first interaction layer for most businesses. Not as a replacement for humans, but as the front door to them. The quiet prediction here is that within a few years, customers will judge businesses less by their websites and more by their conversations. The first response they receive, the tone of that response, and whether they feel understood in those first few seconds will become a primary driver of trust and conversion.

The ELIZA Effect, in that sense, is not about machines becoming human. It is about businesses finally having the ability to design how they are perceived at the exact moment a customer reaches out. The companies that win will not be the ones with the most advanced AI, but the ones that understand this simple shift: language creates perception, perception builds trust, and trust drives revenue.